Opinion: Bloomington’s next community survey needs some work

A couple of weeks ago, the city of Bloomington released the results from this year’s community survey.

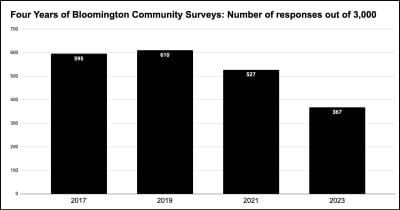

The survey has been conducted every two years for the city of Bloomington by the same firm, Polco/National Research Center. That’s now four surveys’ worth of data that can be tapped for trends.

Based on the city’s online financial records, Polco/NRC has been paid a total of $82,590 for the work, starting in 2017.

Surveys were conducted in 2017, 2019, 2021 and 2023.

Democratic Party primary winner Kerry Thomson, who is unopposed this fall, will almost certainly be the next mayor of Bloomington, starting in 2024.

I think it would make sense to repeat the same kind of survey in 2025. Continuing to ask some of the same basic questions every two years, using the same methodology, would add to a longer-term understanding of how Bloomington’s community attitudes are changing.

But I hope that the Thomson administration will not wait until 2025 to start thinking about that year’s survey.

There are two areas where I think improvements could be made: the number of respondents and the quality of the non-standard questions.

If we care about the attitudes of smaller subgroups of Bloomington’s population, then they should be included in the survey in large enough numbers to measure what they think. That’s different from applying weights to their numbers so that the overall statistical picture for the city of Bloomington is accurate.

In this year’s survey, the weighted number of Black respondents was 11. That’s 11 out of 367 total completed surveys. That could be enough to say that the Black community got its proper statistical weight in the context of the whole community.

But that’s not enough to say anything meaningful about attitudes in Bloomington’s Black community.
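To put a rough number on that, here is a back-of-the-envelope sketch of the margin of error for a group of 11 compared to the full 367. It assumes a simple random sample, a 95 percent confidence level, and the worst-case 50-50 split on a yes-or-no question. It also treats the weighted count of 11 as if it were a raw count and ignores any effect of the survey weights, so it is an illustration, not Polco/NRC’s actual calculation. The function name is mine.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate margin of error for a proportion.

    Assumes a simple random sample of size n. The defaults are the
    worst-case split (p=0.5) and a 95 percent confidence level (z=1.96).
    """
    return z * math.sqrt(p * (1 - p) / n)

# Counts taken from the column; the weighted 11 is treated as a raw count.
groups = [("All completed surveys", 367), ("Black respondents (weighted)", 11)]

for label, n in groups:
    points = margin_of_error(n) * 100
    print(f"{label}: n={n}, margin of error about +/-{points:.1f} points")
```

Under those assumptions, the full sample comes out at roughly plus or minus 5 percentage points, while a group of 11 comes out at roughly plus or minus 30 points. A result of “50 percent satisfied” from 11 people could plausibly mean anything from about 20 percent to about 80 percent.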

If we care about attitudes in Bloomington’s Black community, then we need to work harder to get bigger numbers. One way would be to make the overall sample size bigger than 3,000.

Another way would be to reach out to Black residents and encourage those who were randomly selected as part of the sample to complete the survey. It’s worth noting that the overall response rate to the same survey, conducted by the same company using the same methodology, has dropped from around 20 percent in the first two survey years to around 12 percent this year. So there’s room for improvement on the overall response rate.
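Here is a rough sketch of how those two levers interact. It assumes that 3,000 households were invited this year, that Black respondents would keep making up about 11 of every 367 completed surveys, and that 100 responses is the goal. That target of 100 is a number I picked purely for illustration, and the function name is mine.

```python
import math

# Figures from this year's survey as described in the column.
INVITED = 3000        # assumed number of households invited
COMPLETED = 367       # total completed surveys
BLACK_WEIGHTED = 11   # weighted count, treated here as a raw count

def invitations_needed(target, response_rate, subgroup_share):
    """Invitations needed to expect `target` completed surveys from a subgroup."""
    return math.ceil(target / (response_rate * subgroup_share))

current_rate = COMPLETED / INVITED          # about 12 percent
black_share = BLACK_WEIGHTED / COMPLETED    # about 3 percent of completed surveys

TARGET = 100  # hypothetical goal, chosen only for illustration

for label, rate in [("this year's rate", current_rate), ("a 20 percent rate", 0.20)]:
    needed = invitations_needed(TARGET, rate, black_share)
    print(f"At {label}: about {needed:,} invitations for {TARGET} Black respondents")
```

Under those assumptions, getting 100 Black respondents would take about 27,000 invitations at this year’s response rate, and about 17,000 if the response rate climbed back to 20 percent. Either number is far more than 3,000, which is why I think targeted outreach has to be part of the answer.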

A second area that needs improvement is the wording of the questions that vary from year to year. As an example of a question that I think needs improvement, consider this one.

With the addition of the 7-Line, a separated bike lane on 7th street connecting IU’s campus and the B-Line Trail, the City of Bloomington now has a nearly continuous loop of separated bike lanes. Are you satisfied with the current bike lane infrastructure?

○ I’m satisfied

○ I would like to see more separated bike lanes

○ I would like to see fewer separated bike lanes

○ Neutral / No opinion

I am not sure what this question is intended to measure, much less what it actually measures. I think the problem with this survey item stems from the fact that the text of the question appears to ask for an opinion about one thing, but the choices relate to a slightly different thing.

The text seems to ask about satisfaction with the 7-Line as bicycle infrastructure. Another way to ask that might be: Are you satisfied with the design and implementation of the 7-Line as bicycling infrastructure?

But the choices indicate that the question is trying to measure attitudes about future investments in separated bicycle lanes generally. Asking if you want more or fewer separated bicycle lanes is a weird way to put the question. Does choosing the option for fewer require me to believe that some existing separated bicycle lanes should be ripped out?

There’s also a mismatch between the grammatical structure of the item as a yes-no question, and the choices, which don’t offer yes or no as an answer.

Anyhow, the survey item about bicycle lanes needs improvement. But I think developing a better question for the 2025 community survey will take more than a few minutes of hard thinking by a jerk like me.

One approach might be to assign the task of developing some better survey questions to the relevant city board or commission. For example, the bicycle and pedestrian safety commission or the traffic commission might be recruited to come up with one really great question about bicycling infrastructure.

But I would suggest trying out a different concept, which I think could be used any time the creation of a new city board or commission is contemplated. If there’s a specific task to be done, which provides value to the community, then I think it’s worth paying for that value. Nothing says that the city values the work better than paying cash money for it.

If there’s a specific task that needs to be done, like designing some specific 2025 community survey question, then the city could issue a request for proposals from groups of residents who have interest and expertise in the topic.

The framework for managing these resident consultancies would need to be worked out in some thoughtful way. But there’s plenty of time between now and 2025 to give it some thought.
